This project introduces a new paradigm for sound source localization, referred to as virtual acoustic space traveling (VAST), and accompanies the public release of datasets and code designed for this purpose.

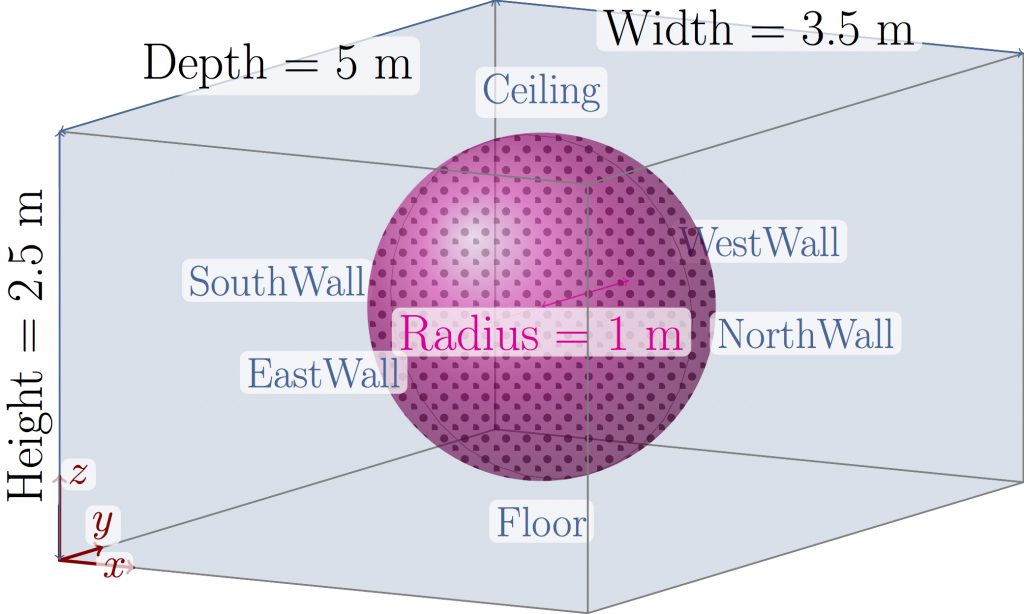

Most existing methods that estimate the position of a sound source, or other geometric properties of an audio scene, rely either on an approximate physical model (physics-driven) or on a calibration set built for a specific purpose (data-driven). With VAST, the idea is to learn a mapping from audio features to the desired geometric properties using a massive dataset of simulated room impulse responses. The dataset is designed to be as representative as possible of the audio scenes the system under consideration may operate in, while remaining reasonably compact. The aim is to demonstrate that mappings learned on a virtual dataset generalize well to real-world data, and to provide a useful tool for research teams interested in sound source localization.
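To make the idea in the paragraph above concrete, here is a minimal toy sketch of learning a mapping from an audio feature to a geometric property using only simulated data. It is not the VAST dataset or the project's code: it assumes a free-field, far-field two-microphone delay model in place of simulated room impulse responses, and uses a plain k-nearest-neighbour regressor as the learned mapping. All names (`simulate_delay`, `knn_predict`, `run_demo`, `MIC_DISTANCE`) and all parameter values are hypothetical and chosen only for illustration.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s
MIC_DISTANCE = 0.2      # m; hypothetical two-microphone spacing


def simulate_delay(azimuth_deg, rng, noise_std):
    """Toy far-field model: inter-microphone delay (s) per azimuth, plus noise.

    Assumption: this replaces a real room simulator for illustration only.
    """
    azimuth_deg = np.asarray(azimuth_deg, dtype=float)
    delay = MIC_DISTANCE * np.sin(np.deg2rad(azimuth_deg)) / SPEED_OF_SOUND
    return delay + rng.normal(0.0, noise_std, size=azimuth_deg.shape)


def knn_predict(train_x, train_y, test_x, k=5):
    """k-nearest-neighbour regression on a 1-D feature.

    For each test feature, average the targets of the k closest training features.
    """
    dist = np.abs(test_x[:, None] - train_x[None, :])
    nearest = np.argpartition(dist, k, axis=1)[:, :k]
    return train_y[nearest].mean(axis=1)


def run_demo(seed=0):
    """Train on simulated directions, evaluate on a noisier held-out set."""
    rng = np.random.default_rng(seed)
    # "Virtual" training set: many simulated source directions.
    train_az = rng.uniform(-90.0, 90.0, size=5000)
    train_x = simulate_delay(train_az, rng, noise_std=1e-6)
    # Stand-in for "real" data: same toy model, different noise level.
    test_az = rng.uniform(-60.0, 60.0, size=200)
    test_x = simulate_delay(test_az, rng, noise_std=2e-6)
    pred_az = knn_predict(train_x, train_az, test_x)
    # Mean absolute azimuth error in degrees.
    return float(np.mean(np.abs(pred_az - test_az)))


if __name__ == "__main__":
    print(f"mean absolute azimuth error: {run_demo():.2f} degrees")
```

The held-out set here is drawn from the same toy model with a different noise level, so it only mimics the virtual-to-real gap; evaluating on actual recordings is the part this sketch does not cover.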